Is AI Making Us Dumb?

What the research tells us

Writing will produce forgetfulness in the minds of those who learn to use it, because they will not practice their memory. … You have invented an elixir not of memory, but of reminding; and you offer your pupils the appearance of wisdom, not true wisdom.

— Socrates

Socrates got it wrong. So did the catastrophizers who warned us about radio, calculators, TV, and even search engines. Google didn’t make us that dumb, so the logic follows that AI won’t make us dumb either. This is false comfort. The conviction that history always repeats itself is getting in the way of our realizing that this time is actually different.

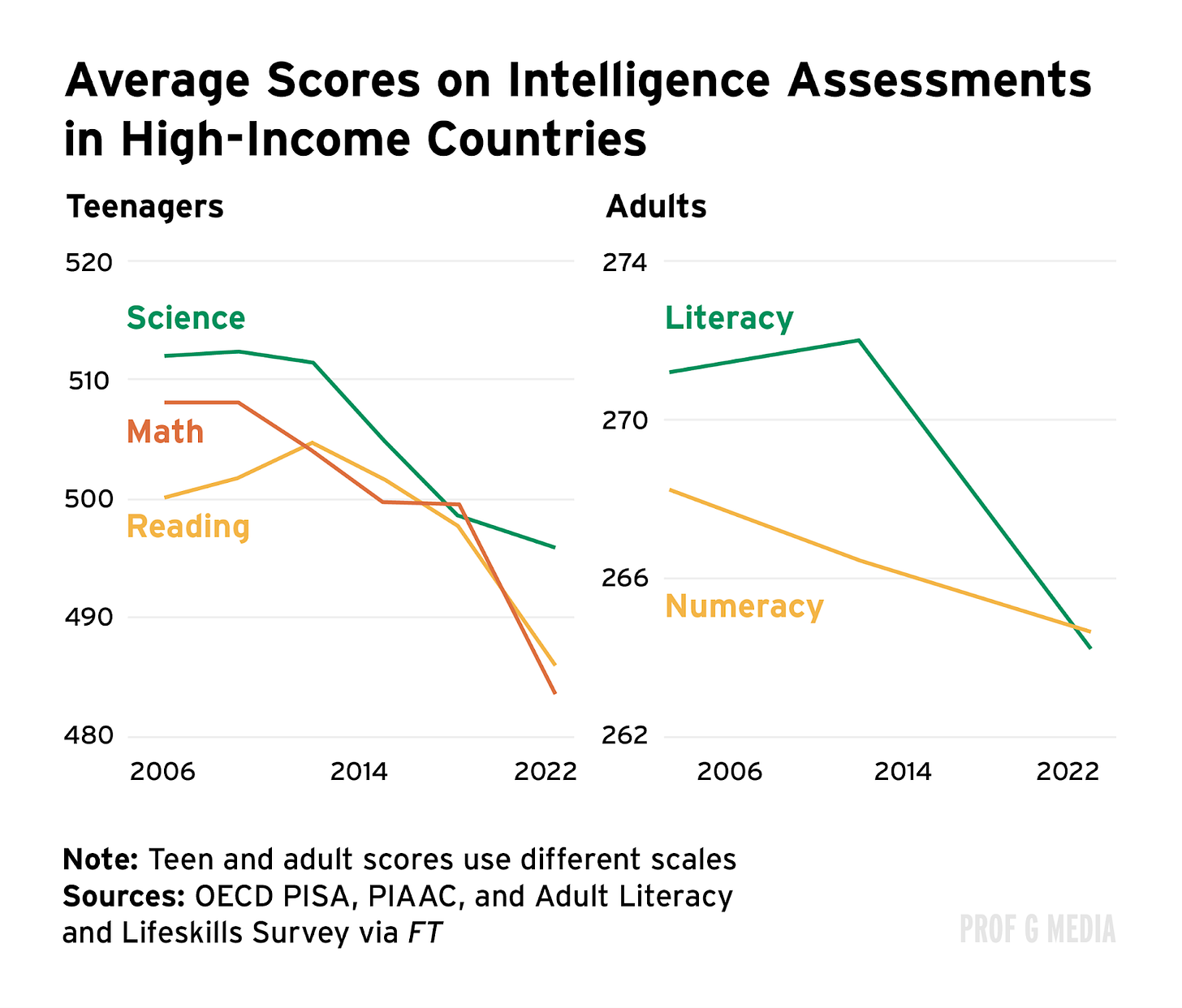

Last month, Dr. Jared Cooney Horvath, a neuroscientist and educator, told Congress that since the 1800s, every generation has been smarter than its parents, until Gen Z. Specifically, Horvath said, Gen Z underperforms “on basically every cognitive measure” ranging from executive function and literacy to general IQ, memory, and attention skills.

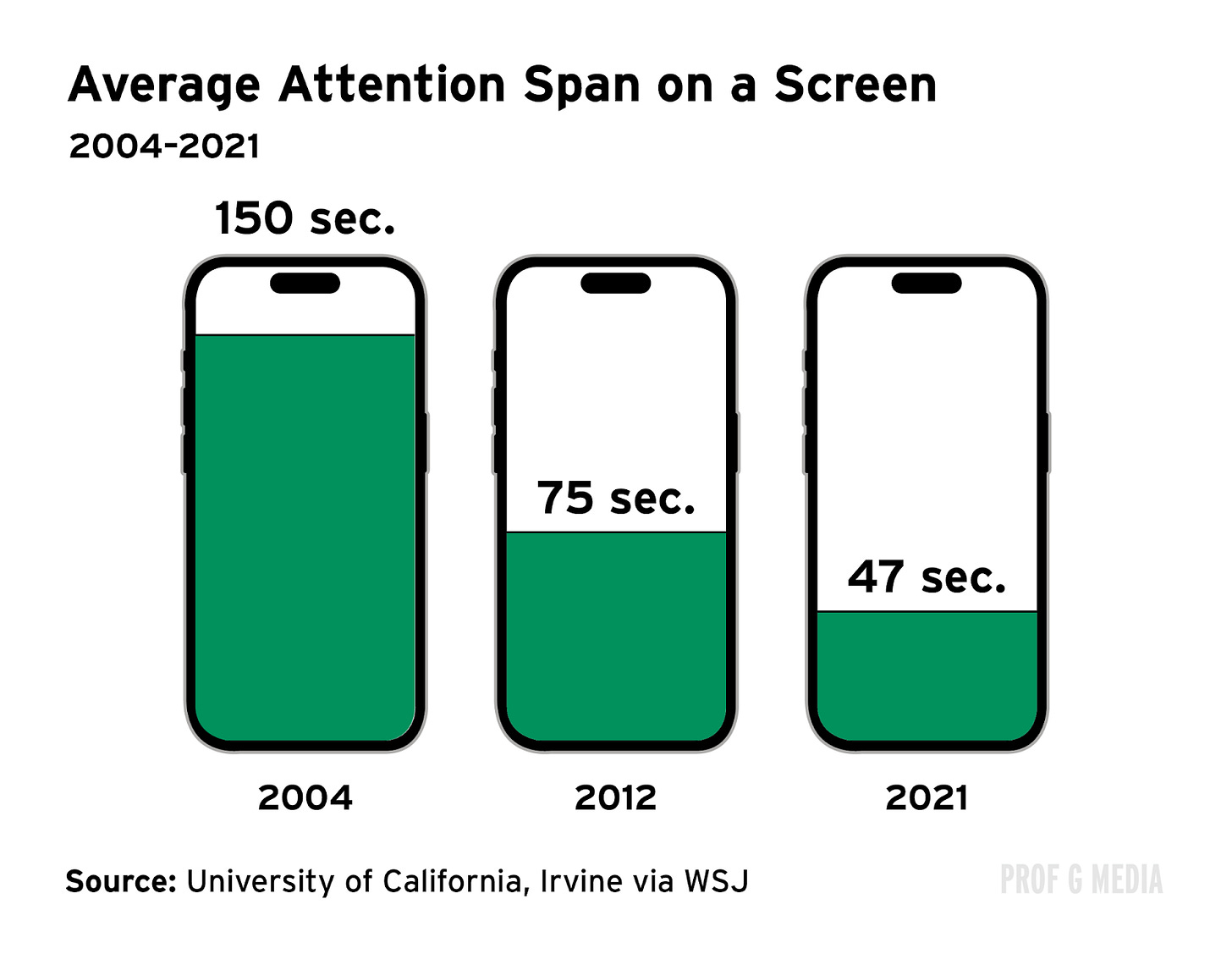

We can’t fully blame AI. After all, it’s only been with us for the last four years. This decline began with the internet and got worse with smartphones. What happened? Instant gratification and constant multitasking became the new normal, making it nearly impossible for people to concentrate for longer than 47 seconds. Getting smarter requires being able to pay attention, and we’re increasingly distracted.

It’s not just Gen Z. Adults have gotten less intelligent too, and AI threatens to make this worse.

Study after study has shown that when we don’t use skills, we lose them. AI isn’t just a calculator or a search engine — it’s a critical thinking machine that can do pretty much anything we ask it. Consistently outsourcing the process of thinking … will change our brains and change our society — for the worse.

We need to be talking about this more

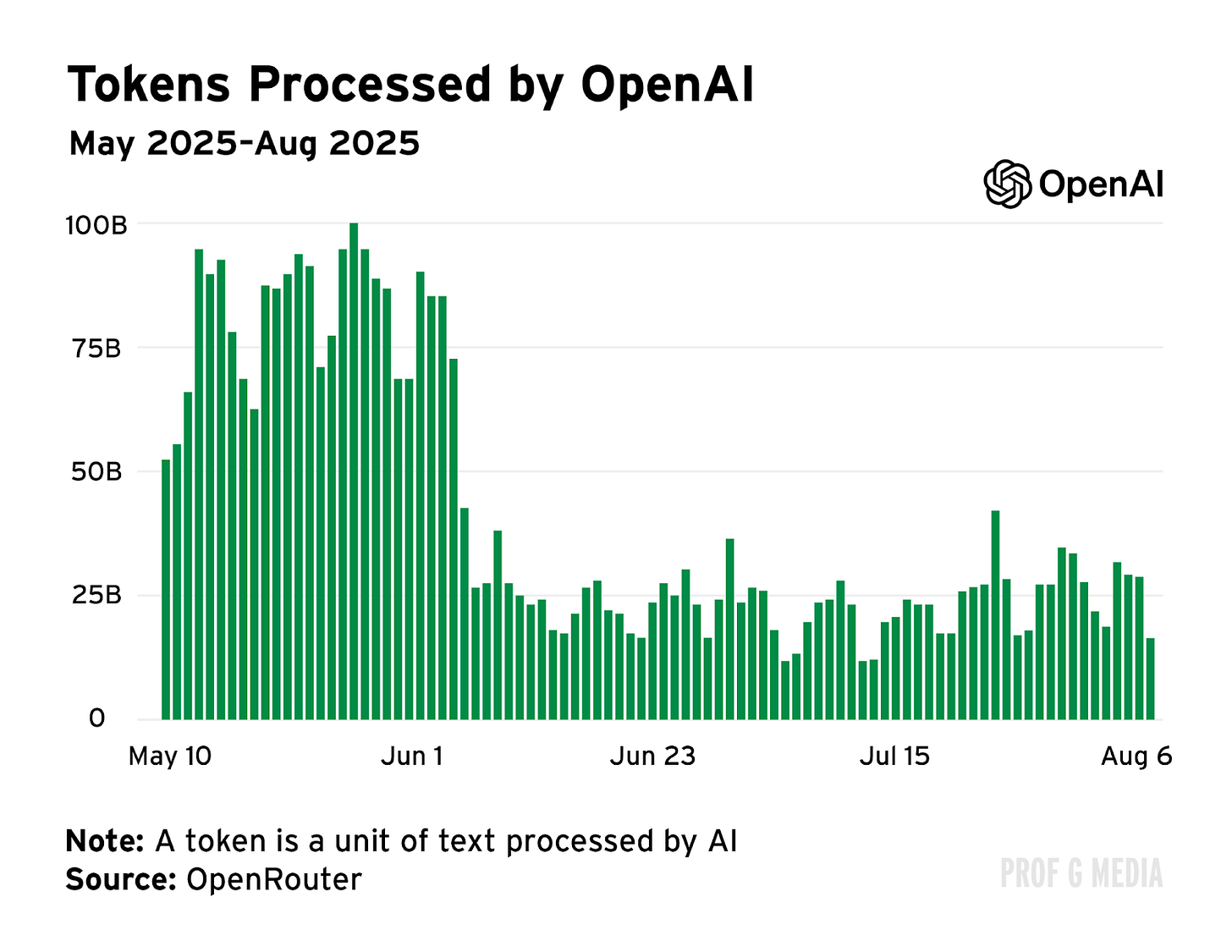

More than 90% of college students used AI for schoolwork in 2025. Eighty-four percent of high schoolers use it. Chat volume on OpenAI dropped precipitously when summer break started last year.

AI is as pervasive in schools as backpacks, and it’s not just because the technology is good at writing five-paragraph essays. AI companies know that the pressure to perform makes students sitting ducks.

Anthropic pays “campus representatives” $1,750 for 10 weeks of promoting Claude. During finals season last spring, both OpenAI and Google offered their Pro tiers to college students for free.

Instructors don’t have the tools they need to regulate AI use in school. Schools are spending thousands on AI detection software, but studies show that the detection tools on the market don’t work. If only there were an effective way to determine what has been created by AI and what hasn’t.

Oh wait, there is. Years ago, OpenAI invented a technology that was 99.9% accurate in detecting AI-generated work but decided not to release it because they were worried it would discourage usage.

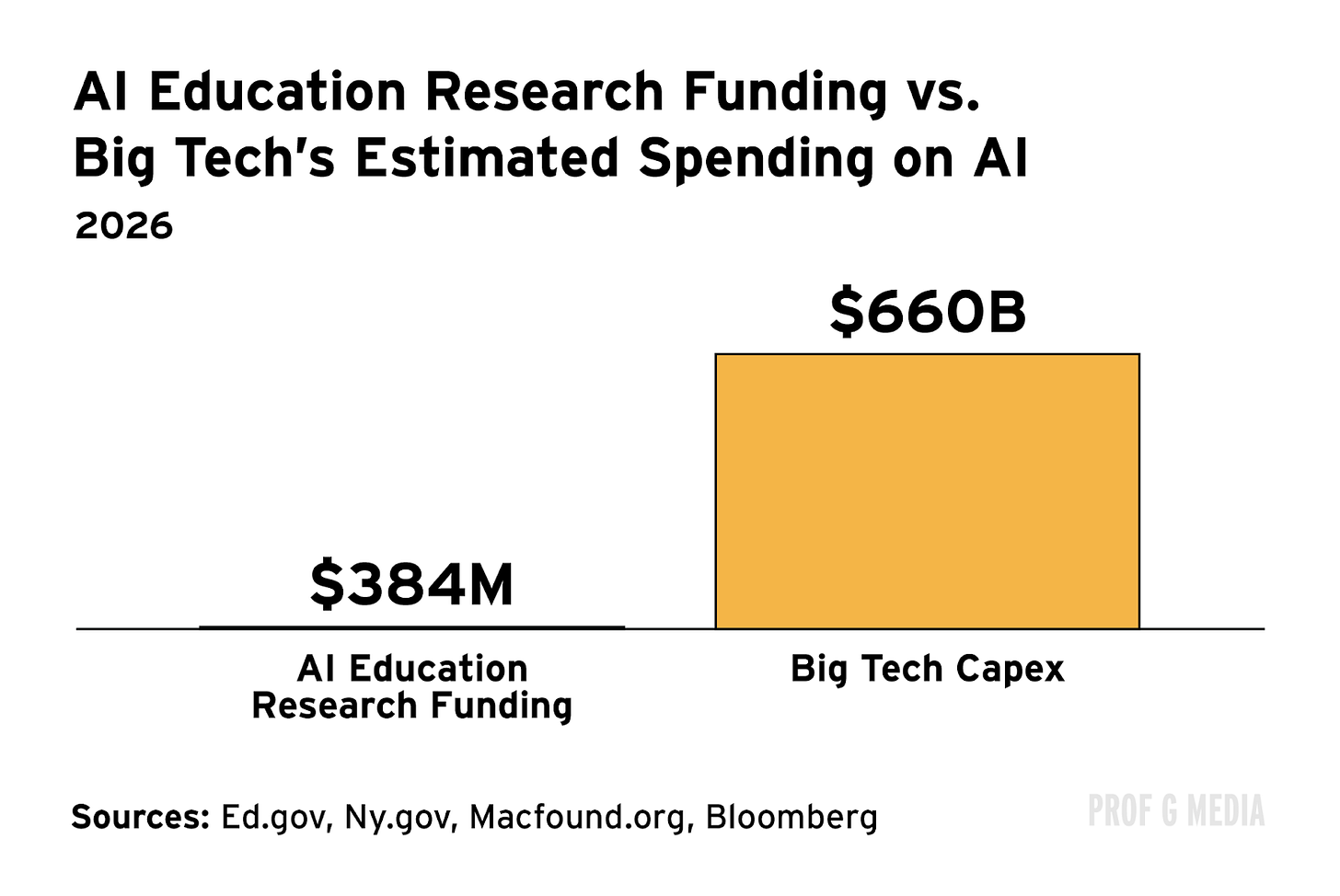

As much as we’d like companies to self-regulate, we know they don’t. Our elected representatives need to step in — but they’re not. How many laws have been passed? Only two — Tennessee and Ohio both passed legislation requiring school districts to develop their own AI policies. How much money is being spent on studies investigating AI’s impact on learning?

The state of intelligence today

IQ scores steadily increased over time, a trend known as the Flynn Effect, until now. As society modernizes, nutrition and healthcare improve, children spend more time in school, and people engage in more cognitively challenging tasks … people get smarter.

Scientists didn’t expect this trend would go on forever. In 2007, it was predicted that IQ scores would plateau in the U.S. by 2024. The key word there is plateau. Not decline.

The most recent standardized testing data shows that American students are getting dumber. The reading skills of American high school seniors are the worst they have been in three decades; a third of 12th graders don’t have basic reading skills.

Forty-five percent of 12th graders scored below the “basic” level in math, which means almost 1 in 2 students can’t do the math on how concerning that is.

COVID-related educational disruptions certainly play a role here, but students’ skills were declining before the pandemic. COVID was only an accelerant.

Crucially, key markers of intelligence are on the decline for adults, too. The OECD assessed literacy and math skills of thousands of adults in 31 developed countries. It found that, over the past 10 years, literacy proficiency improved significantly in only two countries (Finland and Denmark), remained stable in 14, and declined significantly in 11.

Of the U.S. data, Andreas Schleicher, director for education and skills at the OECD, said, “30% of Americans read at a level that you would expect from a 10-year-old child.”

What happened?

Attention is predictive of intelligence, and the internet shortened our attention spans.

The problem is that the internet encourages multitasking and normalizes instant gratification.

A review of over 40 studies on media use and attention concluded: “Those who engage in frequent and extensive media multi-tasking in their day-to-day lives perform worse in various cognitive tasks than those who do not, particularly for sustained attention.”

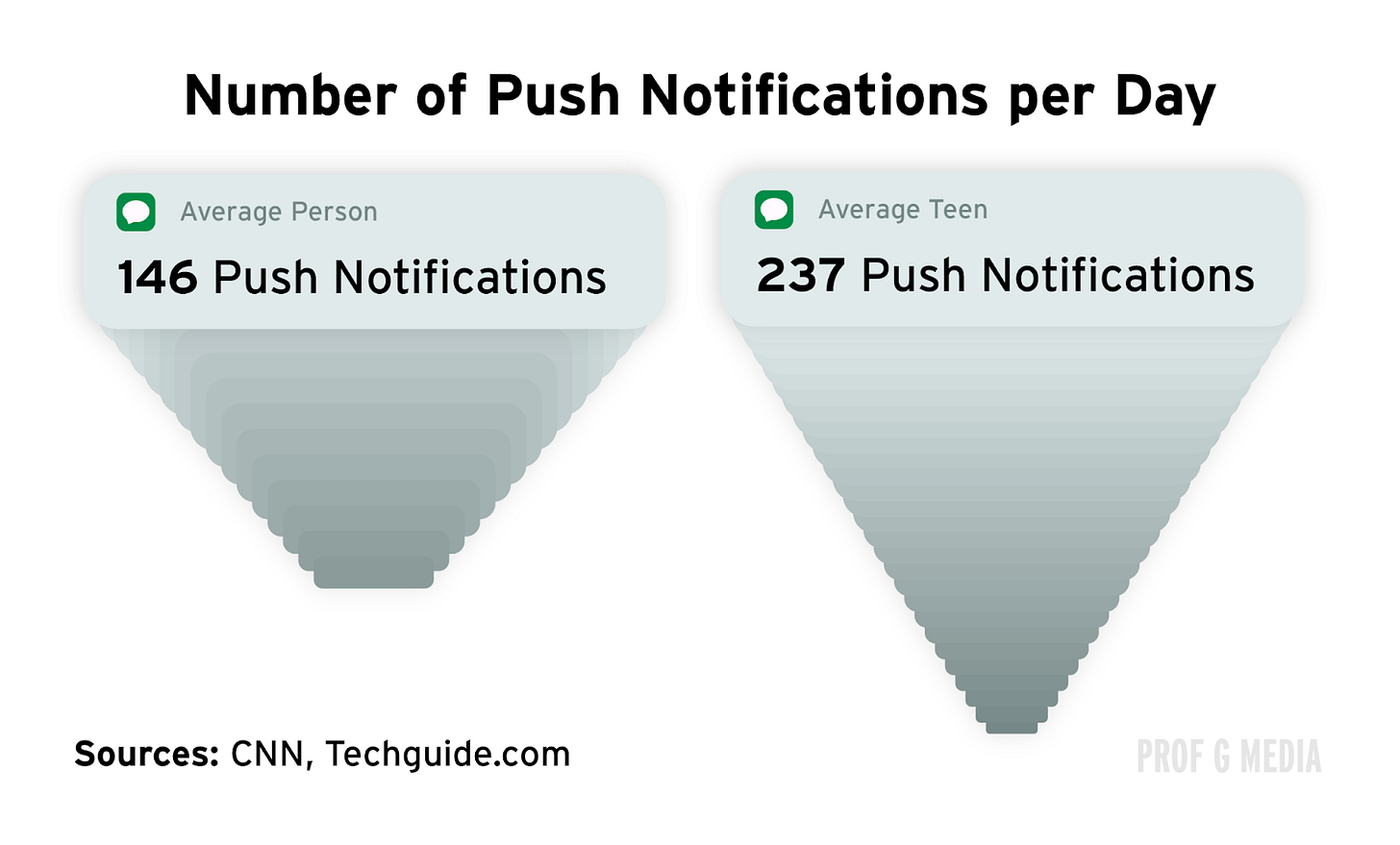

Smartphones only made our attention problem worse by giving us access to unlimited information and reminding us of it 146 times per day, on average. Now, 60% of teens go on their phones while watching TV.

It’s gotten so bad that, according to Matt Damon, Netflix wants writers to “restate the plot” three or four times in movie dialogue to account for distracted viewers.

We’ve become goldfish — constantly flitting between one brightly colored rock and another in pursuit of intentions that we’ve already forgotten.

Fast-forward to today

If the internet wrecked our attention spans and contributed to a marked decline in general intelligence, what will AI do?

When we outsource tasks to digital tools, we become worse at doing them ourselves. The more cognitively demanding the skill is, the more decay we experience if we don’t practice it.

AI isn’t like (old) Google — it doesn’t just give you information. It takes stuff that is hard — whether it’s analyzing a problem, writing an essay, or making a website — and does it for you.

Doing homework with AI, one teacher described to 404 Media, is like “going to the gym and asking a robot to lift weights for you.”

Psychologists described the consequences: “Artificial intelligence assistants are designed to mimic cognitive skills. … For this reason, consistent and repeated engagement with an AI assistant is likely to lead to greater decrements in skill.”

There haven’t been enough studies on this topic, but I’ll present a couple that do exist.

No. 1: Is it harmful or helpful? Examining the causes and consequences of generative AI usage among university students

Researchers compared the performance of students who used ChatGPT for college work with those who didn’t. They found that students who relied on ChatGPT were more likely to report procrastination, worsening cognitive skills, and lower GPAs.

They wrote: “Active learning … is crucial for memory consolidation and retention. Since ChatGPT can quickly respond to any questions asked by a user, students who excessively use ChatGPT may reduce their cognitive efforts to complete their academic tasks, resulting in poor memory.”

This isn’t just a collegiate problem. An instructor who teaches 18-year-olds gave a particularly bleak assessment of how AI has affected students: “My kids don’t think anymore. They don’t have interests. Literally, when I ask them what they’re interested in, so many of them can’t name anything for me. … They don’t have original thoughts. They just parrot back what they’ve heard in TikToks.”

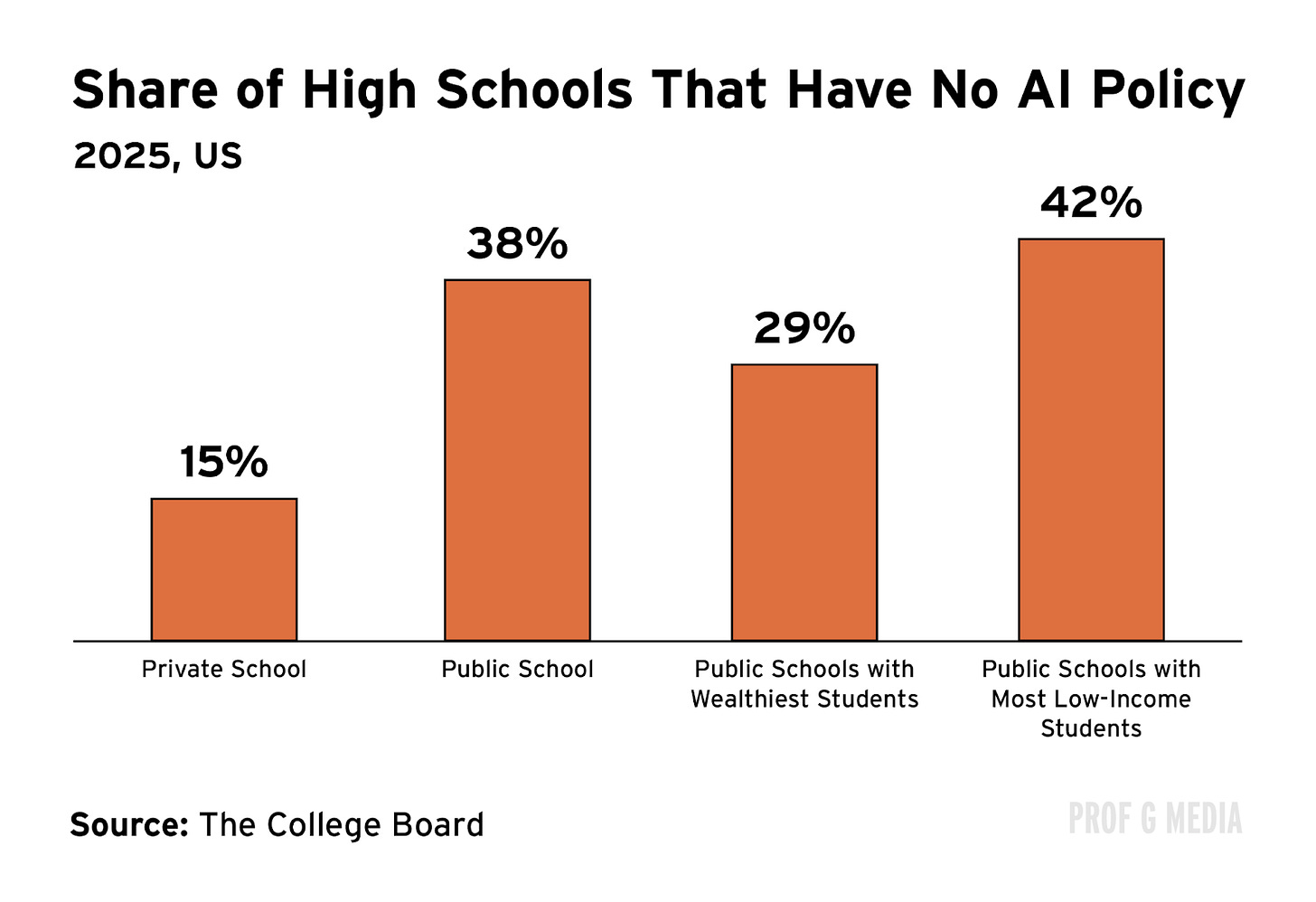

The disadvantages of AI will be distributed unequally. Private schools are more than twice as likely to both permit GenAI use and have policies governing it. Among public schools, those serving more low-income students are least likely to have such policies in place. These differences threaten to exacerbate already bad wealth-based achievement gaps.

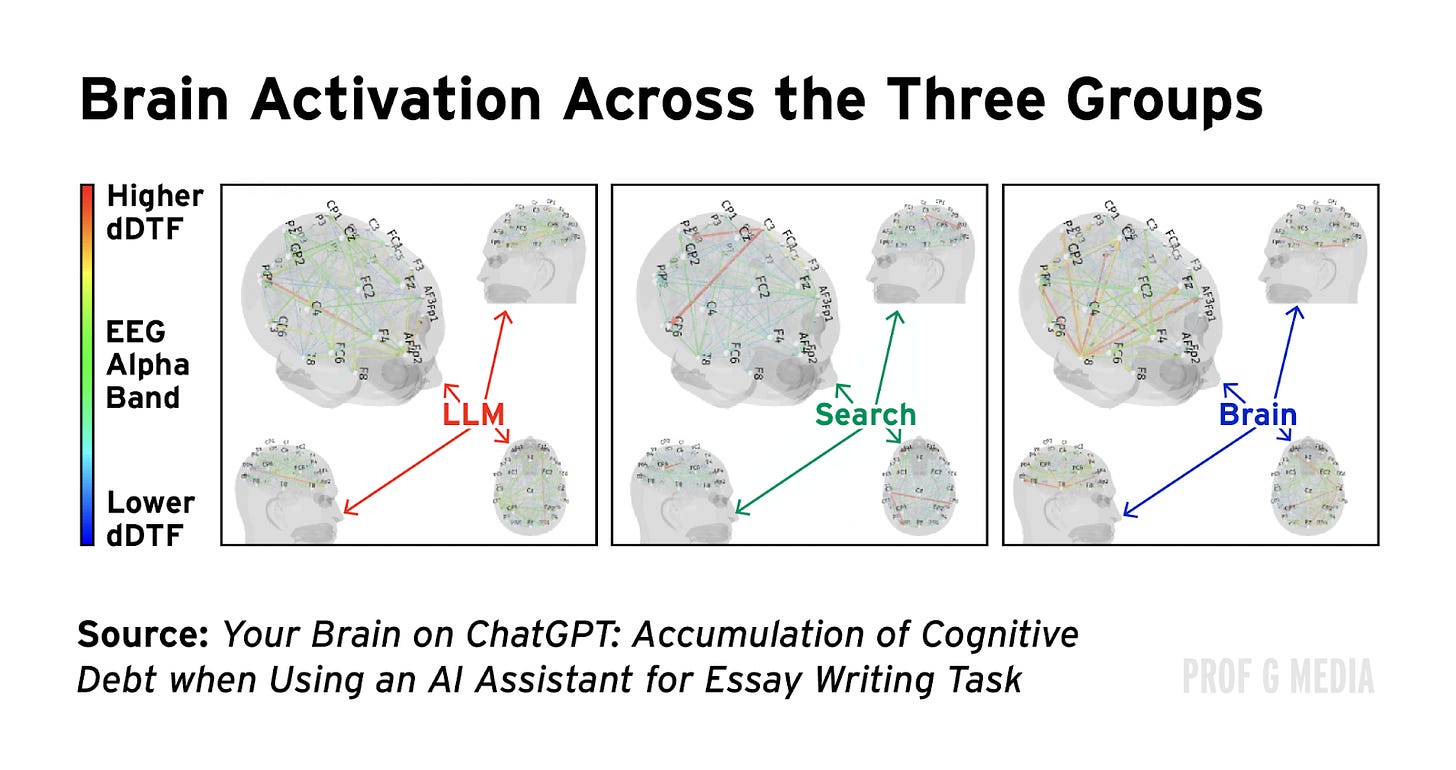

No. 2: Your Brain on ChatGPT: Accumulation of Cognitive Debt When Using an AI Assistant for Essay Writing Task

When we use AI to write, we literally use our brains less.

Researchers had students write essays using a search engine, a large language model (LLM), or nothing. The group writing with the LLM showed 55% less brain activity and wrote essays that lacked creativity and were largely judged to be “soulless.”

The study’s authors concluded: “AI tools, while valuable for supporting performance, may unintentionally hinder deep cognitive processing, retention, and authentic engagement with written material.”

But writing isn’t even the most important skill that AI threatens to atrophy.

Large language models are question-answering machines. You can ask them anything, and they will give you a convincing answer.

The problem is, thinking through a problem and coming to what you believe is the best answer is a really important skill. It’s called critical thinking.

According to Wikipedia, critical thinking is the process of analyzing available facts, evidence, observations, and arguments to reach sound conclusions or informed choices. It involves recognizing underlying assumptions, providing justifications for ideas and actions, evaluating these justifications through comparisons with varying perspectives, and assessing their rationality and potential consequences.

No. 3: AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking

This survey of business school students found a significant negative correlation between frequent AI tool usage and critical thinking abilities, with younger students exhibiting more dependence on AI and lower critical thinking skills.

Over time, continued reliance on AI for critical thinking worsens our ability to do it ourselves. One survey participant admitted as much: “I rely so much on AI that I don’t think I’d know how to solve certain problems without it.”

No. 4: The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers

This study (ironically from Microsoft) found that when knowledge workers were more confident in AI tools, they employed less of their own critical thinking.

Why is critical thinking important? People with strong critical thinking skills are better able to understand complexity, analyze text, construct rational arguments, solve problems, and consider alternative solutions. People who score higher on critical‑thinking measures are reliably better at questioning and evaluating whether information is true.

As a professor at the University of California, Irvine, wrote for The New York Times, “At stake are not just specialized academic skills or refined habits of mind but also the most basic form of cognitive fluency. … This means [students who rely on AI] will lack the means to understand the world they live in or navigate it effectively.”

Variance

I used to use AI more. For the first two years of its existence, I subscribed to the “AI won’t take your job, someone who uses AI will” anthem. Scott and many others have espoused this view. But then I realized that I was focusing on the wrong thing.

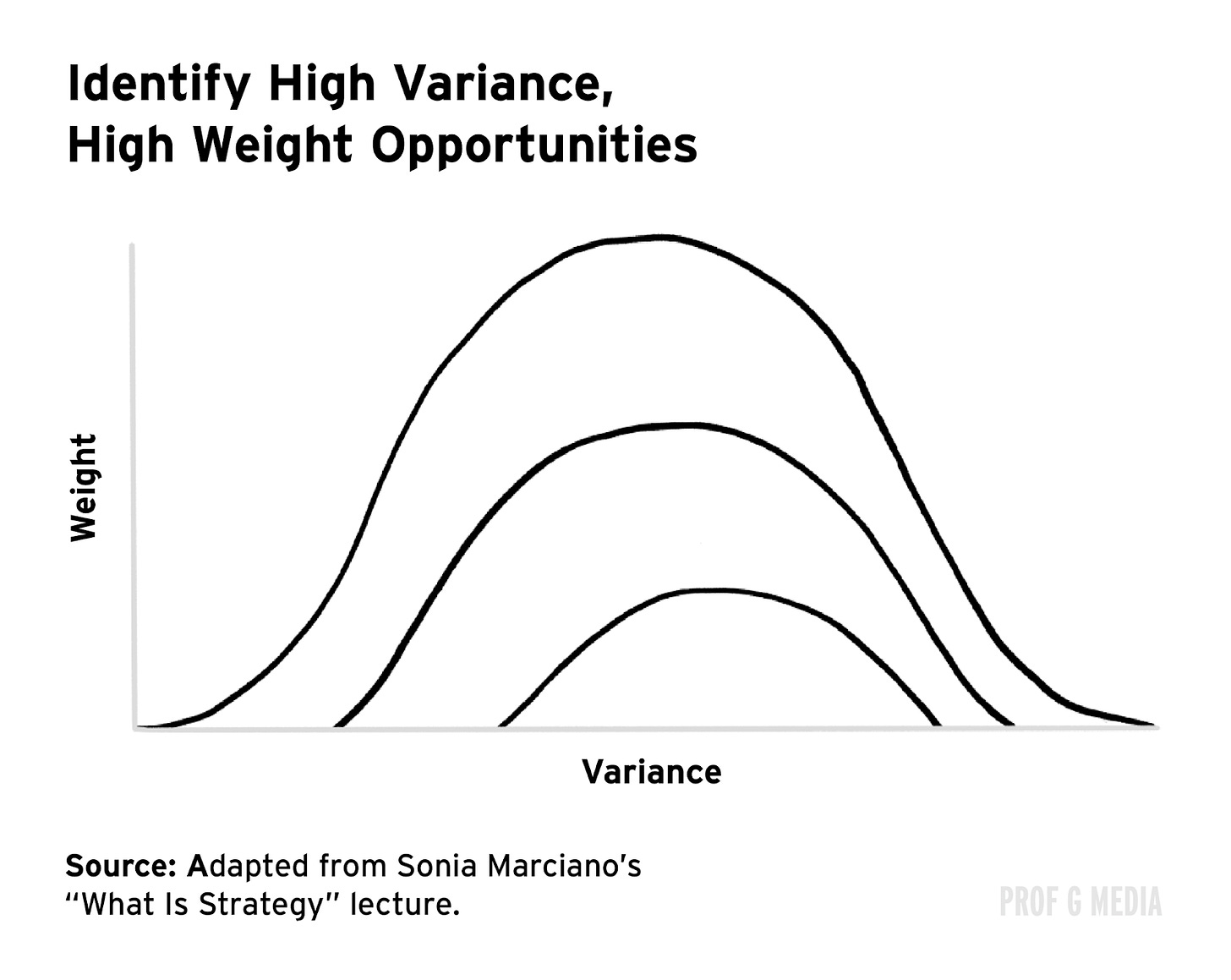

NYU professor Sonia Marciano introduced the idea of variance: To achieve success, the best strategy is to focus on the areas where there are the biggest gaps between the best and the worst. Scott has written about it multiple times.

The concept of variance instructs us to become really good at things that are both important and hard. As I’ve written about before, using AI isn’t hard. Not using AI is hard. Writing a thesis-driven essay or a scientific report by yourself is hard. Coming up with an original perspective on something you’ve read is hard. Critical thinking is hard.

I’m not a Luddite — I believe that AI is a remarkable and inevitable technology, and everyone needs to understand how to use it. It’s also just a really convenient time-saver. But what’s not being acknowledged is that while AI might technically be free, it has a hidden price. If you want or need to outsource your work to AI, you should at least understand what you’re paying.

The cost of AI is your own intelligence.

Regarding AI as an available tool is probably the better way to think of it. Simply outsourcing your work to it means your boss can do the same and stop paying you. It seems to me that one of the most important skill sets moving forward will be the critical thinking necessary to know (or at least suspect) when AI is making stuff up or outright hallucinating.

Using AI to write is such an obvious way to not use one’s mental faculties. I think we don’t even need studies to prove the downside of outsourcing the messy parts of writing or, for that matter, of creating any written or visual artifact. The sad reality is that businesses are making it mandatory for employees to use AI (or automate what shouldn’t be automated) even if they don’t wish to.